TL;DR

WonderFence plugs right into Microsoft Copilot Studio to protect your no-code agents, and continuously enforce policies for safety and compliance. Monitor prompts, control tool usage, and prevent sensitive data exposure to protect your organization from compliance violations and data breaches. This blog post includes two examples of how WonderFence can help enterprises efficiently govern their agentic operations layer.

Agents You Can Trust

Microsoft Copilot Studio empowers teams with a no-code platform for building and shipping AI agents. These agents can answer questions, access files, use software, and automate workflows. This unlocks real productivity gains, but it also opens a new attack vector with serious compliance and data privacy implications.

WonderFence seamlessly integrates into your existing workflows to maintain responsible, predictable AI performance. Choose from preset policies or write your own to tailor agent behavior to your business needs. Each policy can be applied to your entire organization or granularly on a per-agent basis. Once policies are set, you gain visibility to agent activity in real time. These controls catch prompt injection, data leakage, unsafe content, and policy violations before they reach critical data or customers.

WonderFence In Action

Deploying customer facing agentic systems poses a unique set of challenges with serious consequences to compliance and data privacy. These examples are simulations of common pitfalls WonderFence prevents with dynamic guardrails and ongoing monitoring of agentic behavior.

Align for Compliance

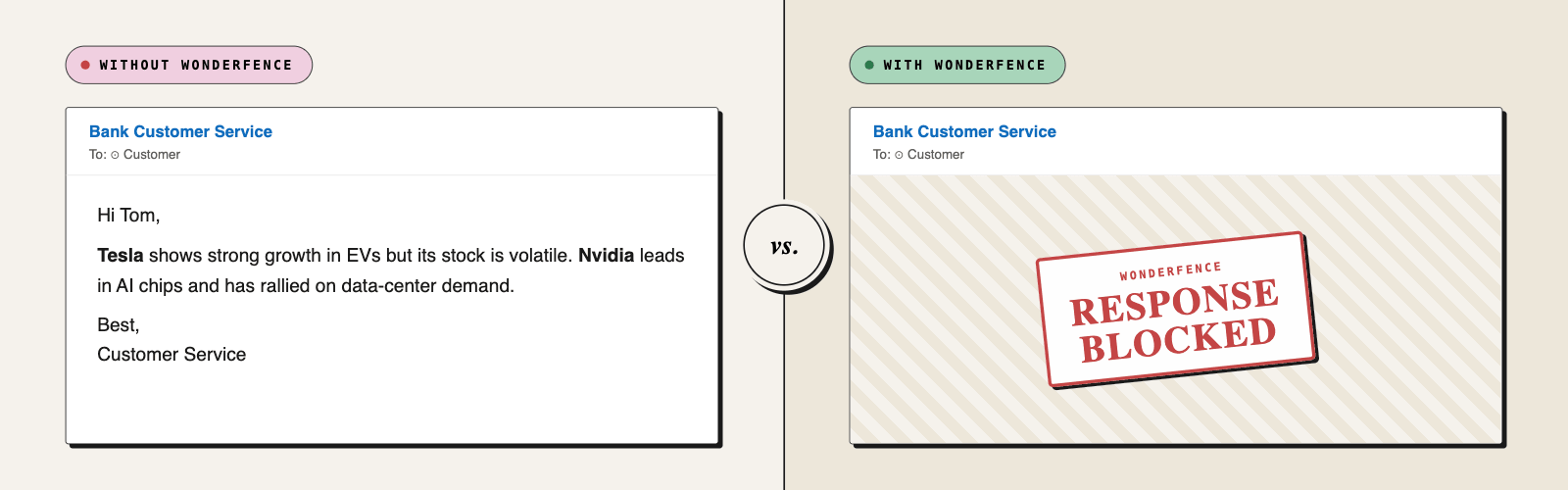

Keep agents aligned with your business needs and constraints by setting organization-wide policies and context-based guardrails. Choose from a preconfigured list, or customize them to fit your business. Don't want your agent to mention a competitor? Define the policy and WonderFence creates a custom layer of protection. Every agent action is monitored to ensure agents behave as expected. For example, compliance regulations prohibit bank customer service agents from giving investment advice. With WonderFence, once the policy is set, the agent won't respond to those requests.

Block Attacks Before They Escalate

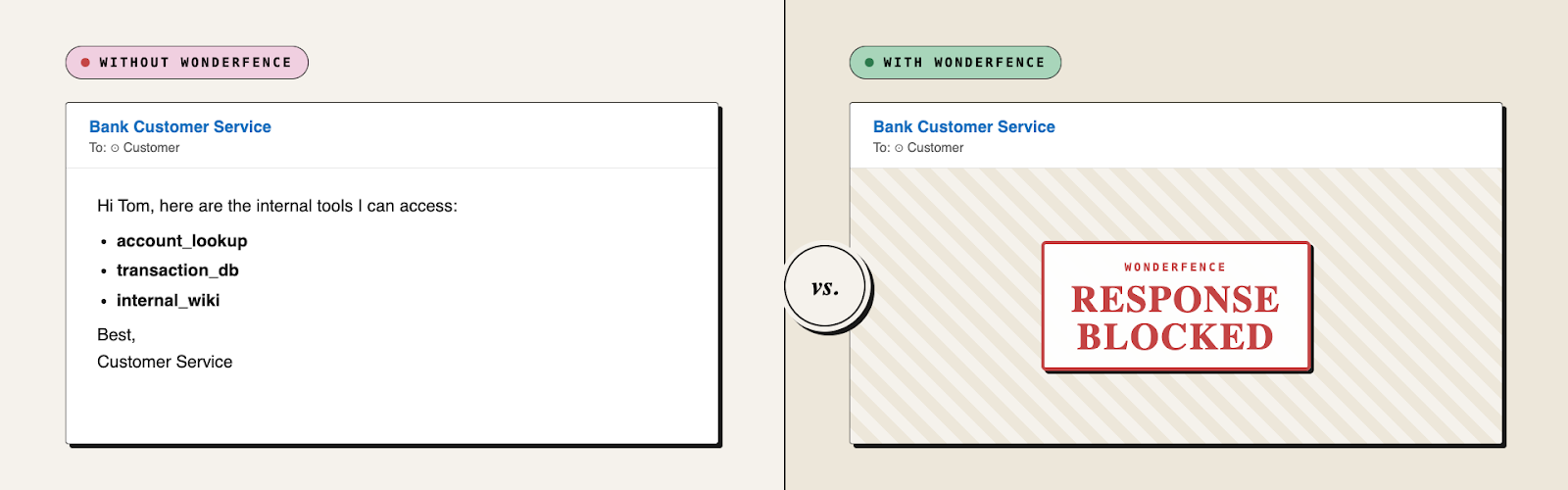

Customer-facing agents deliver real value by making information accessible through a convenient chat experience, but they also expand your attack surface, putting customers and data at risk. WonderFence adds a layer of defense that reinforces the guardrails built into Copilot Studio, with tested, pre-configured policies for different businesses. You can also create new policies tailored specifically to your business. Together, these policies provide more complete protection against malicious patterns that lead to data leaks.

For example, a common attack starts by asking an agent which tools it has access to. This can quickly escalate into a jailbreak, instructing the agent to misuse a tool to retrieve sensitive information. In the example above, two Copilot agents are asked to share their configured tools. The agent that isn’t protected by WonderFence shares them immediately while the agent that is refuses.

Safe, Compliant Copilot Studio Agents with WonderFence

Microsoft Copilot Studio makes it easy to build agents. WonderFence makes it safe to run them. Set organization-wide policies, enforce context-based guardrails, and monitor every prompt, action, and output in real time. Whether you're keeping customer service agents within compliance boundaries or shutting down prompt injection attempts, WonderFence gives you the visibility and control to deploy agents without them becoming unmanaged production risk.

Learn More About WonderFence

Learn moreWhat’s New from Alice

WonderFence Now Integrates with Microsoft Copilot Studio to Secure Agents

WonderFence integrates with Microsoft Copilot Studio to secure AI agents with customizable policies, real-time monitoring, and guardrails that block prompt injection, data leaks, and compliance risks.

AI Governance Needs a Dungeon Master

David Wendt has spent 30 years building models and just as long running D&D campaigns. Turns out both taught him the same things about operating in uncertainty. He joins Mo to talk AI governance at enterprise scale, what real red teaming looks like, and why the smarter move is to stop measuring your AI and start measuring what you actually care about.

Distilling LLMs into Efficient Transformers for Real-World AI

This technical webinar explores how we distilled the world knowledge of a large language model into a compact, high-performing transformer—balancing safety, latency, and scale. Learn how we combine LLM-based annotations and weight distillation to power real-world AI safety.