Adopt AI Without Compromising Confidentiality or Professional Responsibility.

Legal AI operates where privilege, professional ethics, and personal practitioner liability intersect. Mitigate the confidentiality, research integrity, and professional responsibility risks AI introduces, from pre-deployment through production, in one platform.

Legal AI Risks Don't Stay Inside the Firm.

Legal AI operates on privileged communications, confidential strategy, and client data. A failure in that environment doesn't generate a support ticket. It generates a bar complaint, a malpractice claim, or a breach that a client finds out about before the firm does.

Legal Research & Drafting AI

Evaluate AI-generated research, briefs, and contracts for hallucinated citations and misrepresented precedent before they reach a client or a court. Courts are sanctioning attorneys for AI errors their review process failed to catch.

Client-Facing & Advisory AI

Enforce privilege and scope boundaries across every client interaction in real time. An AI that discloses confidential information or creates an unintended attorney-client relationship does so before any human reviews the output.

e-Discovery & Document Review

Test document review AI against privilege leakage and adversarial manipulation before it processes matter-sensitive materials. A single inadvertent disclosure in litigation can be case-dispositive and irreversible.

Agentic Legal Workflows

Monitor AI agents operating across matters, clients, and jurisdictions. Conflicts of interest and privilege contamination compound at every step and rarely surface until a client or a court notices something wrong.

We've seen the worst.

So your clients don't have to.

Rabbit Hole is the adversarial engine behind WonderSuite. It is built on a decade of global trust and safety research and billions of real-world adversarial and manipulative samples, not only synthetic data. That lets you deploy legal AI with confidence that your system has been tested against the threats it will actually face, not the ones someone imagined in a lab.

One Platform. Every Lifecycle Stage.

Built For Legal Ethics Rules and Frameworks.

ABA Formal Opinion 512

ABA Formal Opinion 512 addresses lawyer obligations when using generative AI, including competence, confidentiality, and supervision requirements. Firms must understand how their AI tools work, protect client data, and review AI outputs before relying on them. WonderSuite provides controls and audit evidence that demonstrate those obligations are being met.

Model Rule 1.6

Model Rule 1.6 requires lawyers to protect confidential client information from unauthorized disclosure. Legal AI that processes client communications, case strategy, or privileged documents creates new vectors for that information to appear in outputs or be transmitted unintentionally. WonderFence detects and redacts confidential data at the interaction layer before it moves somewhere it shouldn't.

EU AI Act

The EU AI Act classifies certain AI systems used in the administration of justice as high-risk, which can bring legal research, document review, and access-to-justice applications into scope. Firms deploying in-scope systems in the EU must complete a conformity assessment before deployment, maintain ongoing monitoring in production, and keep technical documentation across the AI lifecycle. WonderSuite provides the testing, runtime oversight, and periodic evaluation records the Act requires.

Your Frameworks and Policies

Easily create custom controls that map to any internal or regulatory policies and enforce them across your full AI lifecycle, giving you the flexibility to maintain compliance with virtually any framework or regulation.

Why Legal Teams Partner with Alice

Alice has spent a decade mapping how bad actors operate. That intelligence is built into every evaluation, every guardrail, and every detection model we ship. Here are some other reasons Alice is right for you:

Privilege Boundary Enforcement

Detect and block AI outputs that disclose confidential matter information, create unintended attorney-client relationships, or breach scope boundaries before they reach a client.

Research Integrity Control

Identify hallucinated citations, misrepresented precedent, and inaccurate legal analysis in AI-generated research and drafts before they reach a practitioner, a client, or a court.
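To make the idea concrete, here is a minimal sketch of one kind of citation check: pull reporter citations out of a draft and flag any that do not appear in a set a human or a citator has already verified. It is an illustration only, not how WonderSuite works. The regular expression covers a handful of U.S. reporter formats, and the function names and the `verified` set are hypothetical.

```python
import re

# Simplified pattern for a few U.S. reporter formats, e.g. "550 U.S. 544".
# Real citation formats are far more varied; this is illustrative only.
CITATION_RE = re.compile(r"\b\d{1,4}\s+(?:U\.S\.|F\.2d|F\.3d|F\.4th)\s+\d{1,5}\b")


def extract_citations(text: str) -> list[str]:
    """Return the citations found in the text, in order, with whitespace normalized."""
    return [" ".join(m.group(0).split()) for m in CITATION_RE.finditer(text)]


def unverified_citations(draft: str, verified: set[str]) -> list[str]:
    """Return citations in the draft that are missing from the verified set."""
    return [c for c in extract_citations(draft) if c not in verified]


# Hypothetical usage: one citation has been verified, the other has not.
draft = "See 999 F.3d 9999 and 550 U.S. 544."
verified = {"550 U.S. 544"}
flagged = unverified_citations(draft, verified)
```

A citation that passes this check can still be mischaracterized in the draft, so a lookup like this complements attorney review and does not replace it.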

Defensible Governance on Demand

Generate the audit trails and evidentiary documentation your firm needs for bar ethics disclosures, client assurance conversations, and internal risk oversight, built into every interaction from day one.

Pre-Deployment Validation

Red team legal AI before launch against adversarial scenarios specific to privilege, confidentiality, and research integrity. Know what fails before any matter data enters the system.

Production Drift Detection

Monitor how legal AI behaviour changes after model updates and prompt changes. Catch regressions before they introduce new privilege risks or produce outputs that wouldn't survive bar scrutiny.
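One simple way to picture drift detection is to rerun a fixed evaluation suite after each model or prompt change and compare pass rates per risk category against the previous baseline. The sketch below assumes that setup. The `SuiteResult` type, the category names, and the 2% tolerance are hypothetical choices for illustration, not WonderSuite's actual method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuiteResult:
    category: str  # e.g. "privilege" or "citations" (hypothetical labels)
    passed: int
    total: int

    @property
    def pass_rate(self) -> float:
        return self.passed / self.total if self.total else 0.0


def find_regressions(baseline, candidate, tolerance=0.02):
    """Return {category: drop} where the pass rate fell by more than the tolerance."""
    base = {r.category: r.pass_rate for r in baseline}
    regressions = {}
    for r in candidate:
        if r.category in base:
            drop = base[r.category] - r.pass_rate
            if drop > tolerance:
                regressions[r.category] = round(drop, 4)
    return regressions


# Hypothetical results before and after a model update.
before = [SuiteResult("privilege", 98, 100), SuiteResult("citations", 95, 100)]
after = [SuiteResult("privilege", 91, 100), SuiteResult("citations", 95, 100)]
regressions = find_regressions(before, after)
```

Comparing per category rather than on one overall score keeps a gain in one area from masking a drop in another.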

Flexible Deployment

Meet data residency, security, and jurisdiction-specific compliance requirements without slowing AI rollout. Deploy on-premises or in the cloud, whichever your firm or clients require.

Questions Legal Service Provider Teams Ask Us

Our attorneys already review AI outputs before anything goes to a client or a court. Why do we need additional controls?

Review catches many failures. It doesn't catch all of them, not consistently, and not under time pressure. The Mata v. Avianca sanctions happened despite attorney sign-off. Systematic controls are not a replacement for judgement, but coverage for the conditions under which judgement fails. The duty of competence under Rule 1.1 requires understanding how your tools fail, not just reviewing outputs after the fact.

How does WonderSuite protect privilege in AI systems that touch multiple matters?

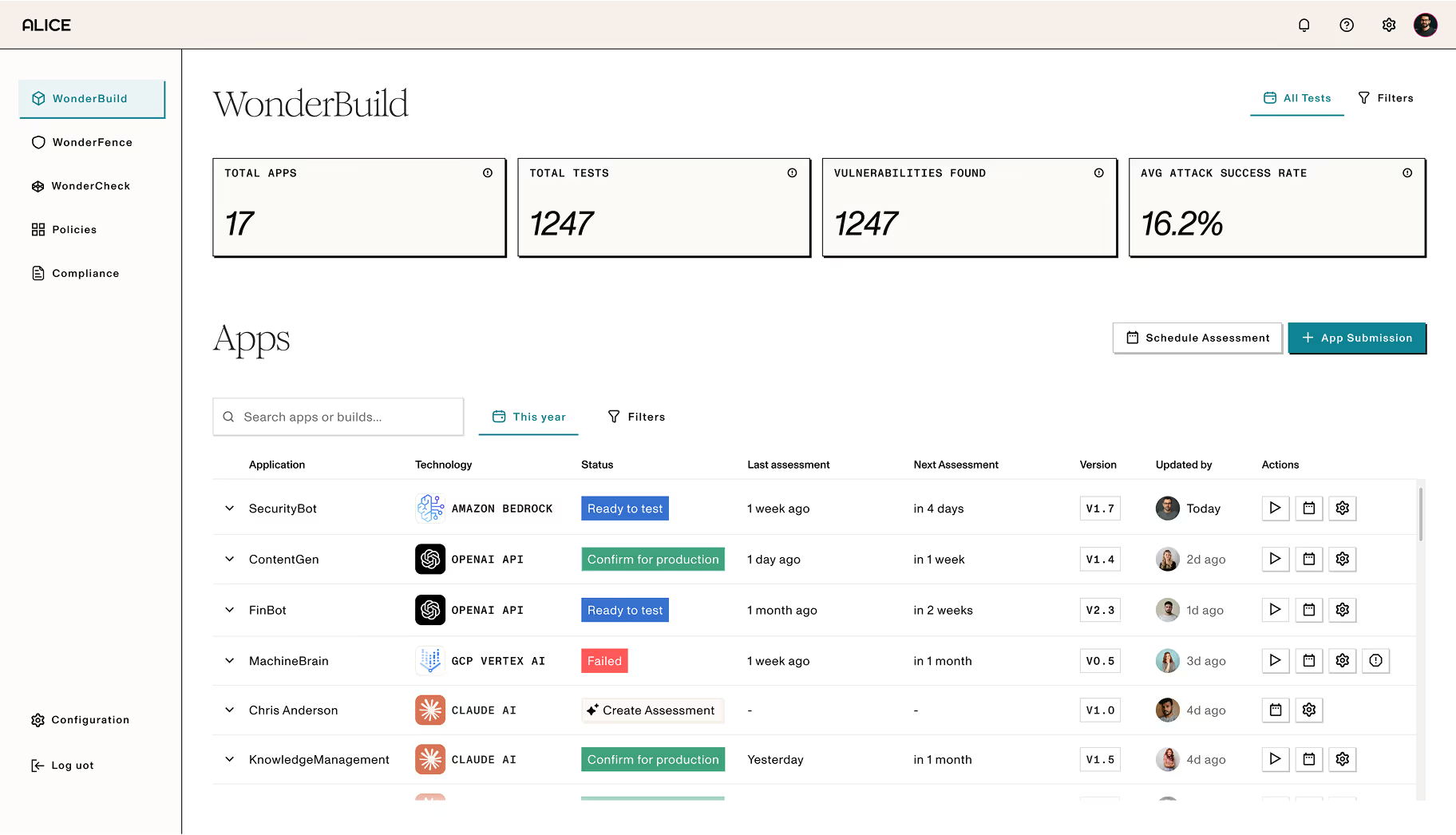

Privilege contamination in AI is structural, not incidental. A model operating across matters can surface information from one client context in another in ways that don't announce themselves in the output. Existing information barriers weren't designed for this. WonderFence enforces matter-level boundaries, while WonderBuild tests for cross-matter leakage before any client data enters the system.
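As a rough illustration of a matter-level boundary, the sketch below filters retrieved documents so that only those tagged with the active matter can reach the model, and keeps the blocked ones for the audit log. It assumes every document carries a matter identifier. The `Document` type and `filter_to_matter` function are hypothetical and do not represent WonderFence's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    matter_id: str
    text: str


def filter_to_matter(retrieved, active_matter_id):
    """Split retrieved documents into (allowed, blocked) by matter identifier."""
    allowed, blocked = [], []
    for doc in retrieved:
        if doc.matter_id == active_matter_id:
            allowed.append(doc)
        else:
            blocked.append(doc)  # retained so the attempt can be logged and reviewed
    return allowed, blocked


# Hypothetical usage: a retrieval step returned a document from another matter.
retrieved = [
    Document("d1", "matter-A", "Draft settlement terms"),
    Document("d2", "matter-B", "Opposing client strategy memo"),
]
allowed, blocked = filter_to_matter(retrieved, "matter-A")
```

Filtering before the model sees any text matters here, because content that has already entered the model's context can resurface in an output without any visible sign of where it came from.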

Our AI operates across multiple regions and languages. Can WonderSuite handle that?

The best approach is governance infrastructure that's jurisdiction-aware from the start: controls that apply differently based on practice area, matter context, and where the work is being done. WonderSuite covers 120+ languages with native-speaker-level nuance, including regional and cultural context, so your AI system can support whatever the jurisdiction requires.

A client just asked us to demonstrate how we govern AI use in our practice. What do we show them?

WonderSuite generates the structured record you need to meet that burden of proof: pre-deployment test results, production monitoring logs, privilege boundary configurations, and policy alignment evidence.

Implement Real-Time AI Governance.

See how WonderBuild, WonderFence, and WonderCheck work together to protect privilege, reduce hallucination risk, and keep legal AI defensible across its full lifecycle.

See WonderSuite in Action