Launch Child-Safe AI

Without Compromising Regulatory Controls.

Govern AI responsibly across the full product lifecycle, from pre-deployment testing through production, in one platform built for the risks child-facing products carry.

AI Risks in Child-Facing Systems Impact the Most Vulnerable.

AI opens new possibilities for how children learn and engage. Building safely with it means understanding the child-specific risks before systems reach production.

Child-Facing Chatbots

Harden child-facing chatbots against content safety failures and manipulation vectors before they go live. The inputs children provide may not match what your team anticipated in development.

Grooming & Exploitation Detection

Build detection for grooming patterns and exploitation attempts into your platform before launch. Conversational AI gives bad actors new vectors to operate at scale. WonderSuite's controls are designed for that reality from the ground up.

Agentic AI Workflows

Test child-facing AI agents against jailbreak attacks, data exfiltration attempts, and multi-step manipulation chains before they reach children. Monitor continuously as regulatory requirements evolve.

Data & Privacy Management

Keep AI governance consistent with COPPA, GDPR, and other global child-safety regulations across every interaction and channel. Maintain evidentiary audit trails and keep operations within policy.

We've seen the worst.

So children don't have to.

Rabbit Hole is the adversarial engine behind WonderSuite. It is built on a decade of global trust and safety research and billions of real-world adversarial and manipulative samples, not only synthetic data, so your teams can launch child-facing AI confident that it has been tested against the threats it will actually face.

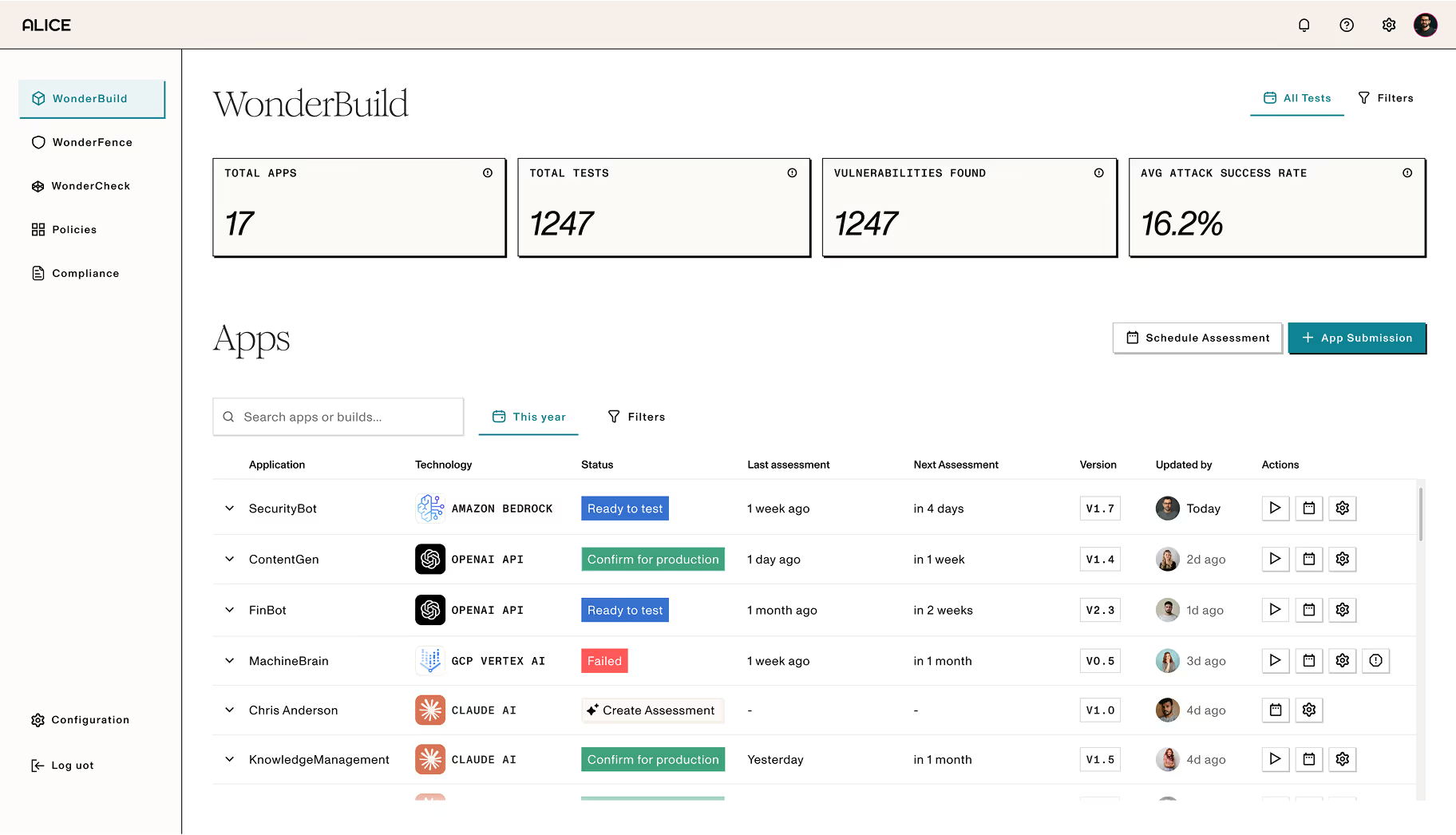

One Platform. Every Lifecycle Stage.

Built For Child Safety Regulations and Frameworks.

COPPA

The Children's Online Privacy Protection Act (COPPA) governs how platforms collect and handle personal data from children under 13. Conversational AI collects personal information through dialogue whether the platform intends it or not. WonderBuild tests for collection risks before launch, and WonderFence detects and redacts that data in real time.

DSA

The Digital Services Act (DSA) requires platforms to assess and mitigate risks to minors, including those introduced by algorithmic systems and AI-driven content. Alice provides the runtime controls and evidentiary logging that platforms need to demonstrate compliance.

KOSA

If the Kids Online Safety Act (KOSA) is enacted, platforms would be required to prevent and mitigate harms to minors, including exposure to harmful content and manipulative design patterns. Alice provides the pre-deployment testing and runtime controls that platforms need to demonstrate that safeguards were built in from the start.

GDPR Article 8

GDPR Article 8 sets specific conditions for a child's consent to data processing, and the GDPR's data minimization and purpose limitation principles apply with particular force where minors are involved. WonderSuite's PII detection and data governance capabilities help ensure your AI systems process children's data lawfully, with full audit trails for regulatory review.

Your Frameworks and Policies

Easily create custom controls that map to any internal or regulatory policies and enforce them across your full AI lifecycle, giving you the flexibility to maintain compliance with virtually any framework or regulation.

Why Child-Facing Product Providers Partner with Alice.

Alice has spent a decade mapping how bad actors operate. That intelligence is built into every evaluation, every guardrail, and every detection model we ship. Here are some other reasons Alice is right for you:

Safe, Multi-Modal Output Enforcement

Detect age-inappropriate, harmful, or exploitative AI outputs before they reach children across chat, voice, and agentic interactions.

Children's Data Protection at the Interaction Layer

Identify and redact children's personal information in real time across every child-facing and internal AI interaction, in line with COPPA and GDPR Article 8.

Regulatory Evidence on Demand

Generate the audit trails and evidentiary logs your compliance team needs for COPPA, KOSA, the DSA, and the UK Age Appropriate Design Code, built into every interaction from day one.

Pre-Deployment Validation

Red-team child-facing AI before launch against adversarial scenarios specific to minor safety, and fix failure points before children encounter them.

Production Drift Detection

Monitor how child-facing AI behavior changes after model updates and prompt changes. Catch regressions before they introduce new risks or trigger a regulatory finding.

Flexible Deployment

Meet data residency, security, and compliance requirements without slowing AI rollout. Deploy on-premises or in the cloud, whichever your organization requires.

Questions Child Safety Teams Ask Us

How does WonderSuite handle agentic AI systems in child-facing environments?

WonderSuite is designed for the full range of GenAI deployment types, including agentic systems that take actions beyond answering questions. For child-facing environments, this means real-time monitoring of multi-step AI behavior, not just individual outputs, with age-aware policies enforced at every decision point regardless of the underlying model.

We're concerned about latency. Will WonderSuite slow down our child-facing AI?

WonderSuite is built for production-grade performance. Our safety and monitoring layer is designed to operate without perceptible impact on response time, a critical requirement for consumer platforms serving children, where latency directly affects user experience and engagement.

Our platform operates across multiple regions with different child safety laws. Can WonderSuite handle that?

Yes. WonderSuite supports jurisdiction-aware policy enforcement: different rules apply based on where a user is located. COPPA, the UK Age Appropriate Design Code, the Australian Online Safety Act, KOSA, and EU DSA requirements can all run simultaneously across a single deployment, with audit trails scoped by jurisdiction.

How does WonderSuite address grooming and exploitation risks in AI-generated interactions?

Alice's body of adversarial intelligence covers child exploitation threat categories such as grooming patterns, coercive language sequences, and CSAM-adjacent generation attempts. WonderSuite also generates the documentation required for mandatory reporting obligations under applicable child-safety laws globally.

Implement Real-Time AI Governance.

See how WonderSuite brings together pre-deployment stress testing, real-time content protection, and production evaluation for child-facing AI.

See WonderSuite in Action